An expert on how data and algorithms are changing work responds to Janelle Shane’s “The Skeleton Crew.”

“The Skeleton Crew” asks us to consider two questions. The first is an interesting twist on an age-old thought experiment. But the second is more complicated, because the story invites us to become aware of a very real phenomenon and to consider what, if anything, should be done about the way the world is working for some people.

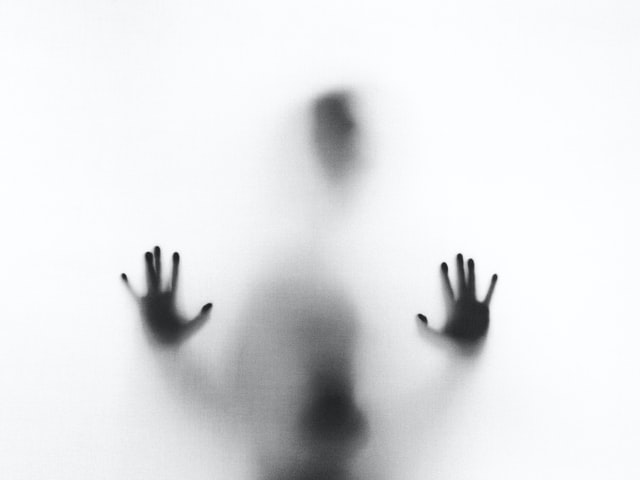

The first question explores what it would mean if our machines, robots, and now artificial intelligences had feelings the way we do. (Recall the Haley Joel Osment child A.I. that was created to suffer an unending love for its human mother while society dies around it.) “The Skeleton Crew” offers a clever twist: The A.I. does have feelings just like ours, because it is, in fact, us. The A.I. is a group of remote workers faking the operations of a haunted house to make it seem automated and intelligent.

It’s a fun take on the trope. Because the A.I. is actually real people with real feelings, the villainy, heroism, or oblivious indifference of the characters around it stands out all the more. The villains interact with the A.I. in murderous ways, and their fear of it is their ultimate downfall. The badass damsel in distress graciously thanks the A.I. for saving her life before she learns that it is really a team of humans. The billionaire is oblivious to the actual workings of the world he’s created, whether shoddy A.I. or real people, and he ghosts as soon as his moneymaking is in question. Interestingly, the crowds of people who go through the haunted house seem most interested in seeing whether they can break the A.I. and prove it’s not actually intelligent (recall the Microsoft Tay release). Perhaps this reflects our human bravado: We want to prove we’re a little harder to replace than A.I. tech companies think we are.

The second question, less familiar and less comfortable, is queued up when Bud Crack, the elderly Filipino remote team manager, says to his team: “I’m trying to explain things to them. What we are. They’re confused.”

Before “they”—those operating in expected, visible roles in society—can offer any kind of assistance, they need to wrap their minds around the very existence of remote workers faking the operations of A.I. In this case, the 911 operator has to get from her belief that “the House of A.I. is run by an advanced artificial intelligence” to a new understanding that there is a frantic remote worker in New Zealand who has been remotely controlling the plastic Closet Skeleton in the House of A.I. and is now the only person in the world with (remote) eyes on a dangerous situation.

This fictional moment mirrors a real phenomenon detailed in the award-winning book Ghost Work by Mary Gray, an anthropologist at Microsoft Research and a 2020 MacArthur Fellow, and Siddharth Suri, a computer scientist at Microsoft Research. Ghost work refers to actual, in-the-flesh human beings sitting in their homes doing paid work to make A.I. systems run. Most machine learning models today use supervised learning, in which the model learns to make correct decisions from a dataset that people have labeled. Much of ghost work is the paid, piecework data labeling that makes this learning possible: for instance, labeling images, flagging X-rated content, tagging text or audio content, proofreading, and much more. You may have done some of this labeling for free by completing a reCAPTCHA that asks you to identify all the bikes or traffic lights in a photo before signing in to a website.

The decade or so of academic research that has emerged around this topic offers a chance to understand these work conditions, as well as the experiences of people who move into and out of these platforms. Three themes connect to “The Skeleton Crew” and offer some visibility into this work experience.

First, many but not all of these work settings are subject to “algorithmic management,” which includes functions such as automated hiring and termination as well as gamified performance evaluation, with scores linked to wages. Here in Silicon Valley, such automated management functions are framed as enabling “scale,” because human supervisors or evaluators are no longer needed. In “The Skeleton Crew,” the automated management functions that monitored the workers’ success at scaring visitors, among other things, had a “hostility that was rivaled only by [the] profound shoddiness.” In the story, as in many real settings, people working on these platforms experience autotermination with no recourse as particularly cruel. I suspect many of us have encountered some form of “algorithmic cruelty,” such as getting locked out of an online account or getting scammed by a fake flower website, with no options for recourse, no phone number to call, no humans to talk to. Now imagine that your income and livelihood were subject to such automated systems and dehumanizing responses. Or, per Hatim Rahman’s research, imagine losing income and professional status on an automated platform for reasons that will never be explained to you and that indeed seem intentionally opaque. “The Skeleton Crew” suggests that the shoddy systems and dehumanizing treatment are completely, almost puzzlingly, unnecessary, perhaps because the billionaire’s company needed to pretend that no humans were operating the system. Real-world examples of people or companies faking A.I. operations are strange, but not uncommon. One New Zealand company seems to have faked a digital A.I. assistant for doctors, with nonsensical interfaces such as requiring clients to email the A.I. system. The founders chided a questioning reporter for choosing “not to believe.” But companies don’t have to make explicitly false claims about A.I. to engage in ghost work.
Some academics and activists, including Lilly Irani, have argued that many human-in-the-loop automated systems such as Amazon Mechanical Turk rely on the invisibility of the people involved, because that invisibility makes the technology appear more advanced and autonomous than it actually is, and that both the rhetoric and the system design work to keep the human labor hidden.

Second, despite some of these cultural conditions and system designs, these work settings, like almost any workplace, are collaborative, social, and meaningful. Take, for example, the Uber and Lyft drivers who band together to game the algorithmically managed prices. Such collaboration is common, even on purposefully individualistic crowd platforms. Another of Gray and Suri’s research papers mapped the collaboration network created by people working on Amazon Mechanical Turk, where “crowdworkers” collaborated to secure the best wages and build social connections (see also the Turkopticon system). Similarly, the Skeleton Crew actively collaborated to create workarounds within the dysfunctional system, including dividing up the Closet Skeleton shifts because the gamified “Scare-O-Meter” worked so badly for this role that it typically meant no wages (until, hilariously, one of them realized the Scare-O-Meter registered a mop as a frightened human face and the whole team could get paid for Closet Skeleton shifts again). Because of their collaboration and how they had “clawed their way together” through this bizarre system, losing a co-worker would have been catastrophic, even in what might look like individualistic jobs.

Third, “The Skeleton Crew” gives insight into these work worlds through its lively examples of how amazingly good humans are at improvisation and at developing situated expertise, capabilities that remain difficult for automated systems. Lucy Suchman and colleagues have written several books and articles analyzing human improvisation and situated expertise, and “The Skeleton Crew” illustrates these ideas with fun details: The team figures out how far the Dragonsulla has advanced through the haunted house by spotting a bit of her star eyeshadow in the malfunctioning bizarro A.I. profiles on the wall; Cheesella knows she can eject one of her cheap plastic skeleton hands to distract the bad guys, and she thinks to pull the fire alarm when she realizes her remote-controlled skeleton self has no way of communicating with the people in the room. The Skeleton Crew’s understanding of the idiosyncratic context, and the collaborative improvisation it took for its members to use the setting expertly to thwart the attack, offer a fun and realistic take on how groups of people work together, even when completely remote and even when mediated through virtual communication.

These themes in the story give us insight into these work conditions and start us thinking about the more complicated second question the story asks us to consider: As society begins to better see and understand the potential cruelties of ghost work, can anything be done? Gray sometimes likens the current moment to when society began to truly understand the realities of child labor and the urgent need for more protective laws. She argues that what is needed is regulation, specifically regulation that recognizes a new “form of employment that does not fit in full-time employment or fully in part-time employment or even clearly in self-employment.” Such regulation involves getting the new employment classification right, and also ensuring needed provisions and benefits for all kinds of relevant work, even as technologies, jobs, and employment statuses change. Pressing for this new classification, and for related provisions and regulations, requires us to see work conditions that have not been easily visible. It also requires companies to recognize that these are not merely temporary work conditions “on the way to automation,” and to take action. Hopefully “The Skeleton Crew” helps begin or continue this awareness and conversation.

Future Tense is a partnership of Slate, New America, and Arizona State University that examines emerging technologies, public policy, and society.